Imagine this scene: you wake up and walk to the bathroom, and while you brush your teeth, your intelligent mirror projects the news of the day, “reads” your eyes with a camera, and suggests what you should eat that day to boost your immune system. After that, your digital assistant runs through your activities for the day while playing music to cheer you up, because your wearables detected that your mood needed it. Your toilet also “reads” that you might need some iron (or recommends a virtual visit with your doctor, and schedules a 5:30 PM session with her while you drive back home).

This sounds like an episode of The Jetsons, but it is actually happening somewhere now, in 2021. We have the technology, we have the devices, and we have the data. The disruption we are living through is happening thanks to something that, for some people, still sounds like sci-fi: Artificial Intelligence (AI).

AI is transforming the way we live. It is democratizing access to some basic rights, like healthcare and education, but it is leaving people behind at the same time.

What is AI?

Short answer: AI is a sophisticated version of statistics. The Oxford English Dictionary defines AI as “The capacity of computers or other machines to exhibit or simulate intelligent behavior; the field of study concerned with this.” [1] Basically, AI algorithms enable models to behave intelligently: predicting events (for example, bank customers defaulting or tumors being cancerous), forecasting sales, identifying objects, and “speaking” human language. AI is a game-changing technology, but the truth is that it has been around for a long time. In fact, in 1950, the British mathematician Alan Turing proposed what is now known as the “Turing Test” to test machine intelligence (a human evaluator asks questions and makes small talk, then judges whether the answers come from a person or a machine).

Today, AI is enabled by the exponential growth of three components:

1. Algorithms. They have been around for decades. For example, Linear Regression (an algorithm used in forecasting models and predictions) is basically linear algebra.

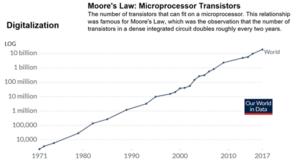

2. Computing power, which makes all of this possible. “Moore’s law” is the observation that the number of transistors on a silicon chip doubles about every two years, and with it the capacity to process these complex calculations and make the algorithms work. Moore’s law started as a prediction, but it has held up remarkably well as an empirical trend. Today, cloud and high-performance computing have accelerated the path to AI readiness [2].

3. Data. It is created with everything we do, whether we capture it or not: with every click, every interaction, every tap on a smartphone. Today we are in the order of zettabytes (trillions of gigabytes), and almost all of this data was created in the last few years. According to Raconteur, every day in 2019 saw 500 million tweets sent, 294 billion emails sent, 4 petabytes of data created on Facebook, 4 terabytes of data created from each connected car, 65 billion messages sent on WhatsApp, and 5 billion searches made [3].
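To get a feel for the doubling described in point 2, here is a minimal sketch of the arithmetic. It assumes an idealized doubling period of exactly two years; the function name `growth_factor` is made up for this illustration, and real chips do not follow the curve this neatly.

```python
def growth_factor(years, doubling_period_years=2.0):
    """How many times larger a quantity becomes if it doubles every period."""
    return 2 ** (years / doubling_period_years)

# Idealized Moore's law: ten doublings in twenty years.
factor_after_20_years = growth_factor(20)
print(factor_after_20_years)
```

Under that assumption, twenty years means ten doublings, which is why a steady-sounding rule turns into roughly a thousandfold increase.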

The exponential growth of data is generating a data deluge (a gap between the data being created and the capacity to process it). That is why we need intelligent systems to capture, store, filter and consume it. Big Data is often described by five Vs (Volume, Variety, Velocity, Veracity and Value), but if we don’t have intelligent and automated systems in place, this data is useless.

As these volumes of data grow, enterprises have the opportunity to build on the investments they have already made in data and use AI to add data-driven insights to their decision making, faster than ever. AI can be applied in a number of areas, including Machine Learning (models learning from the data), Natural Language Processing (recognizing natural human language to engage directly with a user or a customer), Expert Systems (making decisions in real-life situations), Computer Vision (recognizing objects), Speech Recognition (holding conversations and converting speech to text and text to speech), Planning and Optimization systems, and Robotics or Cobots (machines that can see, hear and react using sensors, like humans).

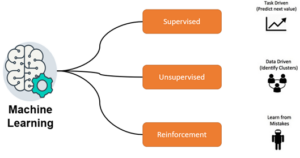

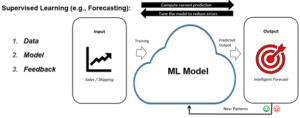

Probably the most popular subfield of AI is Machine Learning (ML), which gives computers the ability to learn from data without being explicitly programmed to perform a task. There are three types of ML: Supervised Learning (we train the model on history, using labeled data sets), Unsupervised Learning (the model clusters unlabeled data and detects patterns), and Reinforcement Learning (the model learns through trial and error, with reward and punishment, like we train our pets).

To explain it with an example, take any sequence: 2, 4, 6, 8. If we are asked what the next number in the sequence is, we will probably say 10. This is a good example of Supervised Learning: we mentally trained our model with the data, detected the pattern, and predicted the next value. Human intuition is pattern recognition. So, we have three pillars:

- Data (the more data, the better the model). Machine Learning models are hungry, and they need clean, unbiased data.

- Model (built on one or many algorithms).

- Feedback (we refine and tune the model by measuring the accuracy of its predictions).
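The three pillars can be sketched with the 2, 4, 6, 8 sequence. This is a minimal illustration, not a production model: it assumes the position in the sequence (1, 2, 3, 4) is the input, fits a straight line with ordinary least squares, and uses mean squared error as the feedback measure. All function names are made up for this example.

```python
# Data: positions in the sequence (inputs) and the values seen there (labels).
positions = [1, 2, 3, 4]
values = [2, 4, 6, 8]

def fit_line(xs, ys):
    """Model: ordinary least squares for one input, returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

def predict(model, x):
    """Apply the fitted line to a new input."""
    slope, intercept = model
    return slope * x + intercept

def mean_squared_error(model, xs, ys):
    """Feedback: average squared gap between predictions and known labels."""
    return sum((predict(model, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

model = fit_line(positions, values)
next_value = predict(model, 5)                       # fifth element of the sequence
training_error = mean_squared_error(model, positions, values)
print(next_value, training_error)
```

On this tiny, perfectly regular data set the fitted line has slope 2 and intercept 0, so the prediction for the fifth position is 10 and the training error is zero. Real data is noisier, which is where the feedback step earns its place.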

Supervised Learning is an AI-based decision-making system that learns from our data, enabling us to focus on strategy and rely on it for some mundane day-to-day tasks. The same applies to personal decisions: we know that when Netflix or Spotify recommends something, we probably will not be disappointed. They know a lot about us, and when they don’t (e.g., when we are new customers), they use the wisdom of crowds, cross-referencing us with customers who have similar selection patterns.

The ultimate sophistication of Machine Learning is Deep Learning. It uses neural networks, which are loosely modeled on the human brain: neurons (nodes) are interconnected in layers, and each neuron processes its inputs and produces an output that is sent to other neurons. A Deep Learning model can process vast amounts of data and adjust the weight of each link in the network, which can make it more accurate than traditional ML models, but it requires more computing power.
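The idea of cross-referencing customers with similar selection patterns can be sketched as follows. This is a toy illustration with invented users, titles and ratings, using cosine similarity over shared titles; it is an assumption for the sake of the example and not a description of how Netflix or Spotify actually build their recommendations.

```python
# Hypothetical ratings (1 to 5) given by three made-up users.
ratings = {
    "ana":   {"Drama A": 5, "Comedy B": 1, "Thriller C": 4},
    "ben":   {"Drama A": 4, "Comedy B": 2, "Thriller C": 5, "Documentary D": 5},
    "carla": {"Drama A": 1, "Comedy B": 5, "Sitcom E": 4},
}

def similarity(a, b):
    """Cosine similarity computed over the titles both users have rated."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[t] * b[t] for t in shared)
    norm_a = sum(a[t] ** 2 for t in shared) ** 0.5
    norm_b = sum(b[t] ** 2 for t in shared) ** 0.5
    return dot / (norm_a * norm_b)

def recommend(user, all_ratings):
    """Suggest titles the user has not seen, taken from the most similar user."""
    others = [name for name in all_ratings if name != user]
    nearest = max(others, key=lambda name: similarity(all_ratings[user], all_ratings[name]))
    seen = set(all_ratings[user])
    unseen = [t for t in all_ratings[nearest] if t not in seen]
    # Best-rated titles of the nearest user come first.
    return sorted(unseen, key=lambda t: all_ratings[nearest][t], reverse=True)

print(recommend("ana", ratings))
```

Here "ana" rates titles much like "ben" does, so she is offered the one title he rated that she has not seen yet.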

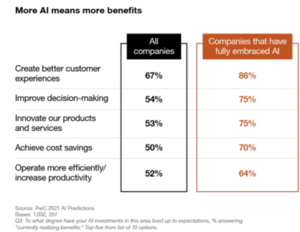
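As a rough sketch of what “neurons interconnected in layers” means in practice, the snippet below passes two inputs through a tiny network with one hidden layer. The weights and biases are arbitrary numbers chosen for illustration, and the sigmoid activation is an assumption; training (the step that actually adjusts the weights) is left out.

```python
import math

def sigmoid(z):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through the activation."""
    return sigmoid(sum(x * w for x, w in zip(inputs, weights)) + bias)

def layer(inputs, weight_rows, biases):
    """Every neuron in a layer sees the same inputs but has its own weights."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

def forward(inputs, network):
    """Feed the outputs of each layer into the next one."""
    activations = inputs
    for weight_rows, biases in network:
        activations = layer(activations, weight_rows, biases)
    return activations

# Illustrative, untrained network: 2 inputs -> 2 hidden neurons -> 1 output.
network = [
    ([[0.5, -0.2], [0.8, 0.1]], [0.0, -0.1]),  # hidden layer
    ([[1.0, -1.0]], [0.0]),                    # output layer
]
output = forward([1.0, 2.0], network)
print(output)
```

Deep Learning models follow the same pattern with many more layers and neurons, which is where the extra demand for computing power comes from.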

Last year, the COVID-19 pandemic accelerated the adoption of digital technologies, including AI. Artificial intelligence is also helping in the fight against COVID in different ways, from synthesizing literature using NLP to automating processes, shortening cycles, and finding breakthroughs earlier. In fact, AI was one of the enablers of having several vaccines on the market in less than a year (traditionally, the R&D process for a vaccine takes 5 to 10 years on average). According to a PwC survey, 25 percent of the US companies surveyed reported widespread adoption of AI, up from 18 percent the previous year [4]. Another 54 percent are heading there fast, and they’re no longer just laying the foundations. Those investing are reaping rewards from AI right now, in part because it has proven to be a highly effective response to the challenges brought by the COVID-19 crisis. In fact, most of the companies that have fully embraced AI are already reporting major benefits.

We saw many opportunities last year, such as shortening R&D and manufacturing cycles, and even reducing experimentation on animals. With AI’s help, we have vaccines on the market in less than a year, and there are many more opportunities to improve industries (e.g., healthcare). The United States has the most expensive healthcare industry in the world, at three trillion dollars, and it is inefficient, with many processes that could be optimized and automated. Research and development is about collecting data and connecting the dots, and AI is very good at this. If we think about how we collect information and learn, a doctor’s work is to collect medical data, analyze it, and give a diagnosis. This is another great candidate for AI. When we see a doctor, if we are lucky, we will see a professional who has already seen many cases like ours and will know what our illness is and how to treat and cure it. If not, we’ll be one of their first patients, and they will be trained with our data. Artificial intelligence is not only about computers getting faster and smarter and taking jobs. It is also about breakthroughs in life sciences.

The good news is that AI is gathering momentum, democratizing access to technology and breakthroughs, and becoming one of the most popular specializations among undergraduate and graduate students. The majority of AI graduates are being hired by the tech industry rather than academia, creating intense competition for talent.

Governments are also developing strategies to lead the AI race. This year, the White House launched a new website to make artificial intelligence more accessible across the U.S.: AI.gov [5]. People visiting the site can learn how artificial intelligence is being used across the country, for example to respond to the COVID-19 pandemic.

Let’s play Socrates: “The only thing I know is that I know nothing.” Business Intelligence (BI) is good for unveiling the “known unknowns”, but there is a big blind spot in our operation: the “unknown unknowns”. In other words, BI can help us find answers to historical questions. This is a lot for some companies, but it is reactive and not enough. By the time we find the answer to a question, it could be too late, or the question could have changed. AI helps us optimize our operation and predict future events proactively, connecting the dots and helping us go beyond the known unknowns and minimize or eliminate blind spots. Yet, one of the biggest challenges with AI is just getting started.

References:

1. Oxford English Dictionary (2021). Oxford University Press.

2. Our World in Data. Moore’s Law: Transistors per microprocessor. https://ourworldindata.org/grapher/transistors-per-microprocessor

3. Raconteur (2019). A day in data infographic. https://www.raconteur.net/infographics/a-day-in-data/

4. Rao, A. & Likens, S. (2021). PwC. AI Predictions 2021. https://www.digitalpulse.pwc.com.au/ai-predictions-2021-report/

5. National Artificial Intelligence Initiative. https://www.ai.gov/